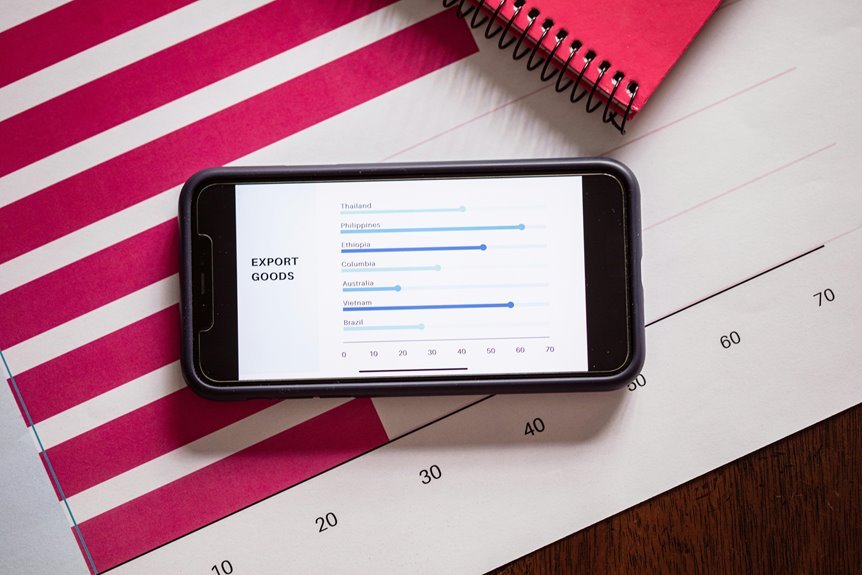

Smart Models 7814529000 translate complex data into actionable insights that can be evaluated against real-world outcomes. Architecture choices are balanced against latency and throughput targets, while deployment and monitoring practices keep costs predictable and results reproducible. Transparency, auditable provenance, and access governance provide control and rollback capabilities, and governed continuous improvement sustains performance from edge to cloud. Labeling rigor and governance together determine reliability, but gaps remain that warrant careful scrutiny before broader adoption.

What Smart Models 7814529000 Do for Real-World Problems

Smart Models 7814529000 address real-world problems by translating complex data into actionable insights across diverse domains. Systematic model evaluation checks reliability and fairness. Rigorous data labeling underpins accuracy, and governance frameworks support accountability and compliance. Latency determines responsiveness, and scalability determines whether performance holds under growing workloads; both guide deployment, monitoring, and continuous improvement through disciplined, measurable procedures.

Choosing the Right Architecture for Your Use Case

When selecting an architecture, practitioners must align system capabilities with the specific use case requirements, balancing factors such as latency, throughput, data volume, and deployment context.

Suitable architecture patterns follow from the data flow, fault-tolerance needs, and scalability requirements, together with deployment considerations such as edge versus cloud placement, security, and maintenance.

The chosen architecture should maximize flexibility, observability, and resource efficiency.
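One way to make this selection concrete is to treat latency, throughput, and deployment context as hard constraints and filter candidate architectures against them. The sketch below is only an illustration: the `Candidate` and `Requirements` types, the candidate names, and all numbers are hypothetical and do not come from any measured system.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    """A hypothetical architecture option with estimated characteristics."""
    name: str
    p95_latency_ms: float       # estimated tail latency per request
    max_throughput_rps: float   # sustained requests per second
    runs_on_edge: bool          # True if it can be deployed on edge devices


@dataclass(frozen=True)
class Requirements:
    """Hard constraints taken from the use case."""
    latency_budget_ms: float
    min_throughput_rps: float
    must_run_on_edge: bool = False


def feasible(candidates, req):
    """Return the names of candidates that satisfy every hard constraint."""
    return [
        c.name
        for c in candidates
        if c.p95_latency_ms <= req.latency_budget_ms
        and c.max_throughput_rps >= req.min_throughput_rps
        and (c.runs_on_edge or not req.must_run_on_edge)
    ]


# Illustrative values only (assumptions, not benchmarks).
CANDIDATES = [
    Candidate("small-edge-model", 20.0, 50.0, True),
    Candidate("large-cloud-model", 180.0, 2000.0, False),
    Candidate("distilled-cloud-model", 60.0, 800.0, False),
]

if __name__ == "__main__":
    cloud_case = Requirements(latency_budget_ms=100.0, min_throughput_rps=500.0)
    edge_case = Requirements(100.0, 10.0, must_run_on_edge=True)
    print(feasible(CANDIDATES, cloud_case))  # ['distilled-cloud-model']
    print(feasible(CANDIDATES, edge_case))   # ['small-edge-model']
```

Softer goals such as flexibility, observability, and resource efficiency can then be used to rank whatever options survive the filter.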

Deploying and Monitoring Smart Models in Production

Production deployment rests on deployment metrics and latency budgets, which make performance observable and costs predictable.
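A latency budget is typically checked against a tail percentile rather than the mean, because a few slow requests can dominate user experience. The following minimal sketch assumes a nearest-rank percentile and a 95th-percentile target; the function names and sample values are illustrative assumptions.

```python
import math


def percentile(samples, q):
    """Nearest-rank percentile: the smallest sample such that at least
    q percent of all samples are less than or equal to it."""
    if not samples:
        raise ValueError("percentile() needs at least one sample")
    ordered = sorted(samples)
    # Multiply before dividing to avoid floating-point surprises in the rank.
    rank = max(1, math.ceil(q * len(ordered) / 100))
    return ordered[rank - 1]


def within_budget(latencies_ms, budget_ms, q=95):
    """True if the q-th percentile latency does not exceed the budget."""
    return percentile(latencies_ms, q) <= budget_ms


if __name__ == "__main__":
    observed = [10, 12, 11, 300]         # one slow outlier
    print(percentile(observed, 95))      # 300
    print(within_budget(observed, 50))   # False
```

In this example the mean latency would hide the outlier, while the percentile check flags the budget violation.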

Model governance formalizes oversight, auditing, and reproducibility, while feature pipelines structure data flow, validation, and feature stability.
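The validation step of a feature pipeline can be sketched as a schema check applied to every incoming row before it reaches the model. The schema below, including the feature names `age` and `session_seconds` and their ranges, is a hypothetical example rather than a description of any real pipeline.

```python
# Hypothetical schema: feature name -> (expected type, minimum, maximum).
SCHEMA = {
    "age": (int, 0, 120),
    "session_seconds": (float, 0.0, 86400.0),
}


def validate_row(row, schema=SCHEMA):
    """Return a list of human-readable problems; an empty list means valid."""
    errors = []
    for name, (expected_type, low, high) in schema.items():
        if name not in row:
            errors.append(f"{name}: missing")
            continue
        value = row[name]
        # bool is a subclass of int in Python, so reject it explicitly.
        if isinstance(value, bool) or not isinstance(value, expected_type):
            errors.append(f"{name}: expected {expected_type.__name__}")
        elif not low <= value <= high:
            errors.append(f"{name}: {value} outside [{low}, {high}]")
    return errors


if __name__ == "__main__":
    print(validate_row({"age": 30, "session_seconds": 12.5}))  # []
    print(validate_row({"age": 130}))
```

Logging the returned errors over time is one simple way to observe feature stability, since a rising error rate suggests that upstream data has changed.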

Together, these practices sustain reliability, scalability, and freedom to iterate responsibly.

Mitigating Risks: Transparency, Control, and Best Practices

Mitigating risks in smart-model deployments centers on transparency, governance, and disciplined practices that make outcomes observable and controllable.

Transparency guidelines clarify data provenance, model intent, and performance boundaries, enabling independent assessment.
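One lightweight way to record provenance, intent, and performance boundaries is a structured summary stored alongside each model version. The `ModelCard` class and its field names below are assumptions chosen for illustration, not an established schema.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class ModelCard:
    """Hypothetical record of what a model is for and where it came from."""
    name: str
    intended_use: str
    training_data_sources: list
    metrics: dict = field(default_factory=dict)       # e.g. {"accuracy": 0.9}
    known_limits: list = field(default_factory=list)  # conditions to avoid

    def to_json(self):
        """Serialize with sorted keys so diffs between versions stay stable."""
        return json.dumps(asdict(self), indent=2, sort_keys=True)


if __name__ == "__main__":
    card = ModelCard(
        name="example-classifier",                      # placeholder name
        intended_use="Ranking support tickets by urgency",
        training_data_sources=["tickets-2023-export"],  # placeholder source
        metrics={"accuracy": 0.9},                      # placeholder value
        known_limits=["Not evaluated on non-English tickets"],
    )
    print(card.to_json())
```

Because the output is plain JSON, an independent reviewer can read it or compare it across versions without access to the training code.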

Control mechanisms for access, auditing, and rollback ensure accountability, safety, and measurable alignment with stated objectives and user autonomy.
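The three control mechanisms can be combined in a small registry that checks who may change the active model, records every attempt, and can revert to the previous version. This is a minimal in-memory sketch; the `ModelRegistry` class, its methods, and the user names are hypothetical, and a real system would persist the audit log durably.

```python
from datetime import datetime, timezone


class ModelRegistry:
    """In-memory sketch of access control, auditing, and rollback."""

    def __init__(self, allowed_users):
        self._allowed = set(allowed_users)
        self._history = []    # promoted versions, oldest first
        self.audit_log = []   # append-only record of every attempt

    @property
    def active(self):
        """The currently active version, or None if nothing was promoted."""
        return self._history[-1] if self._history else None

    def _record(self, user, action, version, ok):
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "user": user,
            "action": action,
            "version": version,
            "ok": ok,
        })

    def promote(self, user, version):
        """Make `version` active if `user` is authorized; always audit."""
        ok = user in self._allowed
        self._record(user, "promote", version, ok)
        if not ok:
            raise PermissionError(f"{user} may not promote models")
        self._history.append(version)

    def rollback(self, user):
        """Revert to the previous version and return it; always audit."""
        authorized = user in self._allowed
        possible = len(self._history) > 1
        self._record(user, "rollback", self.active, authorized and possible)
        if not authorized:
            raise PermissionError(f"{user} may not roll back models")
        if not possible:
            raise RuntimeError("no earlier version to roll back to")
        self._history.pop()
        return self.active


if __name__ == "__main__":
    registry = ModelRegistry({"alice"})
    registry.promote("alice", "v1")
    registry.promote("alice", "v2")
    print(registry.rollback("alice"))  # v1
```

Recording failed attempts as well as successful ones keeps the audit trail useful for accountability, since denied actions are often the most informative entries.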

Conclusion

When these practices are in place, the model's outputs track real-world constraints: latency aligns with user flow, accuracy with labeled ground truth, and governance with audit trails. Architecture choices reflect the deployment context, monitoring reveals drift as forecasts are compared with fresh data, and rollback options stand ready when needed. Together, these alignments form a disciplined, transparent path from insight to reliable action.